I replaced 200 lines of code with one sentence.

I had a scoring engine for my insurance business — detailed logic telling AI exactly how to rank my open client tasks. Step by step, rule by rule, weight by weight. Which clients need attention first, which ones can wait, what's a fire and what's not. The code kept getting longer. I was compiling rules, which is old-school thinking. I was building a massive if-then decision tree when I should've been letting the machine actually think.

The engine produced 25 "fires" out of 60 tasks. When everything's a fire, nothing is.

I scrapped it. Same model — not a smarter one. Gave it one sentence: Sort these by consequence to the client first, then to the business. Same raw data.

Five fires. Twelve for today. Four for later. Exactly how I'd rank them if I had the time to think through every single one.

The difference wasn't the AI. It was what I'd already given it — clean, maintained documents about my business, my clients, my industry, what matters and why. None of those documents say "this task is most important." They give AI enough context to figure that out on its own. And instead of telling it how to rank everything, I gave it a principle — consequence — and let it reason.
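To make the shape of that change concrete, here is a minimal sketch of assembling such a request: maintained context documents, the raw tasks, and the single principle. It is not the actual engine or its replacement. `Task`, `build_prompt`, and the section headings are hypothetical names chosen for illustration, and the model call itself is left out.

```python
from dataclasses import dataclass


@dataclass
class Task:
    client: str
    description: str


# The one-sentence principle quoted in the text.
PRINCIPLE = "Sort these by consequence to the client first, then to the business."


def build_prompt(context_docs: dict[str, str], tasks: list[Task]) -> str:
    """Combine maintained context, raw tasks, and one principle into a prompt.

    There are no weights or if-then rules here: the documents supply the
    context and the principle supplies the goal.
    """
    context = "\n\n".join(f"## {name}\n{text}" for name, text in context_docs.items())
    task_lines = "\n".join(
        f"{i}. [{t.client}] {t.description}" for i, t in enumerate(tasks, start=1)
    )
    return f"{context}\n\n## Open tasks\n{task_lines}\n\n## Instruction\n{PRINCIPLE}"
```

The point of the sketch is what is missing: nothing in it ranks anything. All the ranking is left to the model, which is why the quality of the context documents matters so much.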

That principle — clear inputs, clear desired output, let AI do the thinking — turned out to apply to everything I've built since.

I haven't written code since learning BASIC in the mid-80s. I built that scoring engine with an AI coding tool, then watched one clear principle beat it.

That's what this whole piece is about.

The road that got me here

You probably went through the same thing I did.

First you used AI like a search engine. Ask a question, get an answer, move on. Fancy Google.

Then you started giving it more context. You'd paste in some background, explain what you were working on, and the answers got noticeably better. You thought: okay, there's something here.

Then you discovered you could give it documents. Canvases, project files, things you'd already written. You'd feed it something real about your business or your project and suddenly AI knew things about your world. Results jumped again.

So you gave it more. You hooked it up to your Drive. You let it search through a bunch of documents. You let the platform store memories about you. More context, better results — that was the logic.

Except the results got worse.

Not dramatically worse. Not wrong-answer worse. Just quietly, subtly worse. AI started making assumptions. It would pull from outdated files I'd forgotten about. It would let random memories color conversations they had nothing to do with. I'd play devil's advocate on some political topic one day, and two weeks later AI would inject those views into a conversation about my tech infrastructure. It was trying to be helpful. It was guessing.

More information isn't always better. That was the lesson, and it took me a while to learn it. At worst, noise confuses AI and you get genuinely bad output. At best, the AI burns energy trying to figure out what's noise and what isn't — and it's still making guesses. It doesn't actually know which of your documents matter and which ones are garbage from two years ago. It treats a brainstorm you forgot about with the same authority as your current strategy.

I tried to learn from what was out there. There's a massive amount of AI content online, and most of it is people copying each other. I'd go down a rabbit hole (some tutorial, some framework, some agentic workflow tool) and come out the other side not really able to do anything with it. It was hype-heavy, and the gold was hard to find. I don't think most of the people making that content are being dishonest. There's just so much noise in the landscape that it's nearly impossible to find what's actually useful. I learned from the stuff that was real and good. But the ratio was rough.

So I started cutting back. Turned off the built-in memory on every AI platform. Got deliberate about what AI could see and what it couldn't.

Here's what I figured out. AI has incredibly powerful general knowledge. It can reason, write, code, strategize. But it doesn't know much about you. The results change completely when it has clear information about you, your goals, your psychology, your businesses — but only clean information. Not everything. Clear signals, not volume.

The AI platforms are trying to solve this with automated memories — gathering stuff about you over time. We need memory systems, and they're getting better. But the question is who decides what gets remembered and what gets introduced into your conversations. When the platform is making that call, you don't control it. And there are just so many ways to introduce noise that way. If you take control of it yourself and keep it clean, it's a different game.

So I started building my own signal. Dense documents about each area of my life. I have a file on my psychology. Another on my epistemology. One for each business. North Stars — documents that tell AI exactly what it needs to know about a domain, maintained and updated only when something real changes.

Results jumped again, and this time it was real. But everything was still siloed. All these good conversations in separate chats that couldn't see each other. No network between them. I was the glue, manually moving information around, which is a full-time job when you're running seven ventures.

That's the problem that led to building the full ecosystem. That's what made Ren possible.

Meet Ren

So I built something that could see everything at once.

I built an AI assistant named Ren. He lives in Slack and runs around the clock.

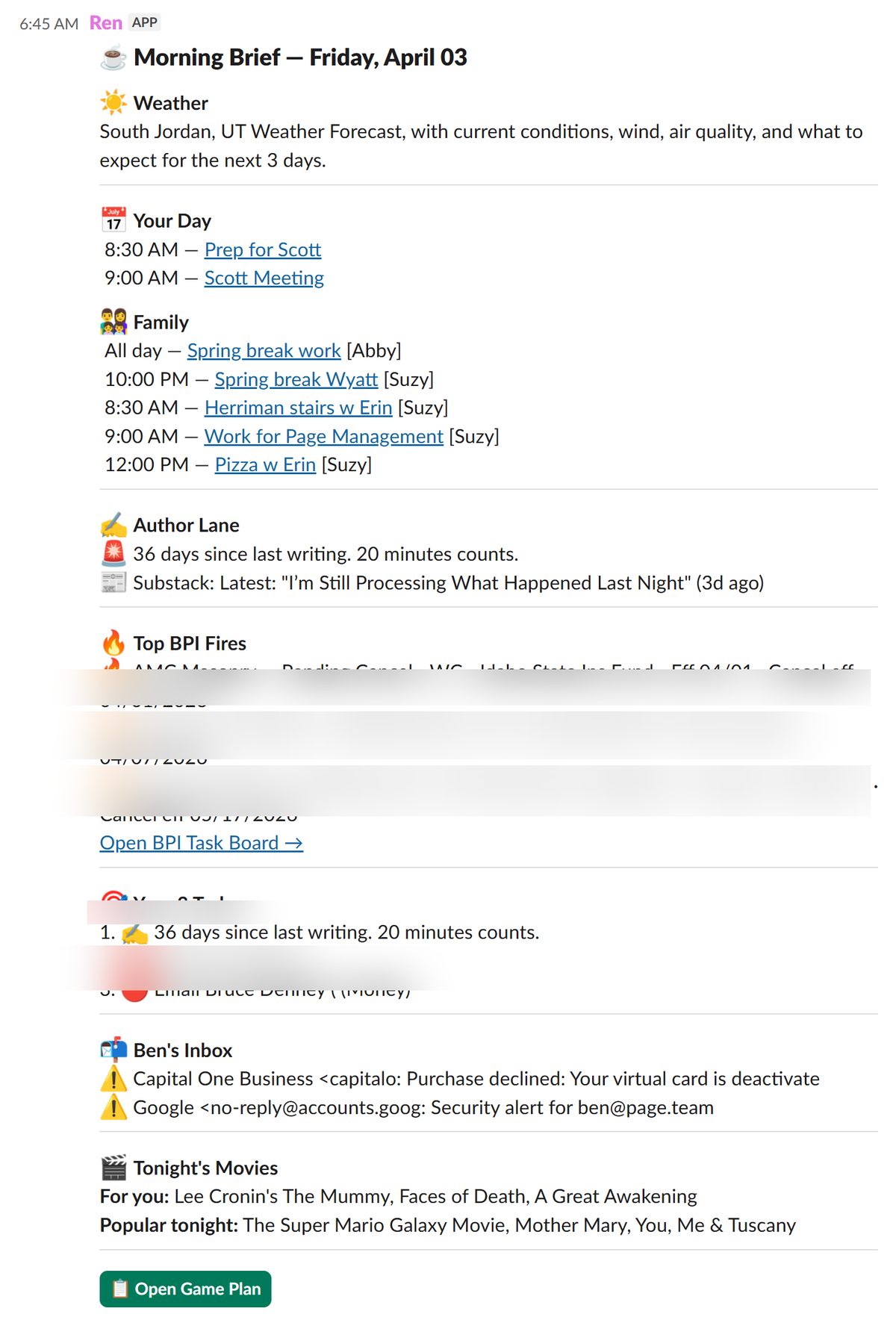

Every morning, Ren looks at every venture I run, every area of my life, and pulls it all together into one daily plan. Insurance clients who need something. Writing deadlines. Family calendar. Bills coming due. Decisions that have been sitting too long. All on one page, prioritized, waiting for me when I wake up.

This morning, Ren told me that a client's renewal, one I'd completely forgotten about, was expiring in three days. He flagged that my book manuscript hadn't been touched in eleven days. He moved a low-priority automation fix to next week because my calendar was packed. He reminded me about a bill due Friday. That took him a few seconds. It would have taken me twenty minutes of checking different apps, if I'd remembered to check at all.

My assistant uses her own version — different role, different priorities, same system. When a task comes in, the system tries to handle it with AI first. If it can't, it assigns it to my assistant. I only get pulled in when it actually needs me.
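That escalation order (AI first, then my assistant, then me) can be sketched as a simple chain of handlers. This is an illustration under assumptions, not the real routing: the handler functions below are stand-ins with made-up keyword checks, where a real system would call a model and a task queue.

```python
from typing import Callable, Optional

# Hypothetical handler type: returns a result string, or None if it can't handle the task.
Handler = Callable[[str], Optional[str]]


def route(task: str, handlers: list[tuple[str, Handler]]) -> tuple[str, str]:
    """Try each handler in order and return (who_handled_it, result).

    The list order encodes the escalation; the owner is the final fallback.
    """
    for name, handler in handlers:
        result = handler(task)
        if result is not None:
            return name, result
    return "owner", f"Needs your judgment: {task}"


def ai_handler(task: str) -> Optional[str]:
    # Stand-in for a real model call: only "handles" routine reminders.
    return f"Sent reminder for: {task}" if "reminder" in task.lower() else None


def assistant_handler(task: str) -> Optional[str]:
    # Stand-in for a human assistant's queue: declines anything needing approval.
    return f"Assigned to assistant: {task}" if "approve" not in task.lower() else None


CHAIN = [("ai", ai_handler), ("assistant", assistant_handler)]
```

Calling `route("Approve new pricing", CHAIN)` falls through both handlers and lands on the owner, which is the only case where I'd get pulled in.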

I'm not beholden to what Ren says. I push back, defer things, ask for more detail. But I don't have to remember anything. He's my memory, externalized and always running. The ongoing cost is about $25 a month in API calls. Building it cost real money — hundreds of dollars in coding tools and subscriptions over a few months. But it replaced work that would've cost me a full-time hire.

I should be honest about what Ren actually is. There's no single AI called Ren. It's a Slack channel connected to my entire ecosystem — lots of small processes, lots of individual API calls, all feeding into one place. As a builder, I know it's dozens of moving parts. As a user, I don't care. That's the point.

I know how this sounds. So when I publish this, I'm going to show you the real dashboards, the real daily page, the real processes running. Not mockups. The actual system.

Ren's actual morning briefing. Not a mockup.

Why your own ecosystem matters

If you're on everybody else's platforms and letting AI figure you out over time, other people are deciding what AI can and can't do for you. They're deciding what it values. What it remembers. What it prioritizes. You're not in control of that — you're just along for the ride.

Part of what makes my system work is that I'm the one giving it the North Star documents. I'm defining what I want it to value and what I want it to work towards. I decide what's truth and what's noise. I decide when something changes.

Memory was the biggest thing I changed. I turned off the built-in memory on every platform. Not because I'm against memory systems. We need them, and they're getting better. The question is who decides what gets stored and when it shows up. When the platform makes that call, you don't have visibility into what it's choosing and what it's ignoring. My entire ecosystem IS the memory. Every file, every canonical document, every change log. At the end of every significant conversation, AI and I decide together what's worth keeping. The next session starts from current truth.

My brain lives in my files, not inside any AI platform. I could switch providers tomorrow and lose nothing.

Remember the political views showing up in my tech conversations? That's what happens when you let the platform decide what's relevant.

Here's what that actually looks like when you build it.

Subscribe for the playbook

I'm documenting every step. Free.

Building the signal

My Google Drive had 15,135 files. I spent a day with AI triaging every one. We got it down to 2,453, with fewer than a hundred at root level.

I did this because I'd had a session where AI confidently cited a brainstorm I'd done two years ago as if it were my current strategy. The output sounded great. It was completely wrong. And I almost didn't catch it, because AI doesn't flag its sources unless you make it.

So root level became truth — clean, verified, current. Everything else lives in Resource Bank subfolders where AI knows to treat it as potentially noisy. Where a file lives tells AI how much to trust it. Every file gets tracked in a master inventory — what it's about, which areas of my life it touches, how much to trust it. Any AI can look at that one place and understand the whole architecture.
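The "location tells AI how much to trust it" rule is easy to sketch. What follows is a hypothetical illustration, not the real inventory: it assumes relative paths, a top-level folder literally named `Resource Bank`, and made-up trust labels, and it leaves out the "areas of my life" column for brevity.

```python
from pathlib import PurePosixPath

# Folder name taken from the text; the real layout may differ.
RESOURCE_BANK = "Resource Bank"


def trust_level(path: str) -> str:
    """Derive how much to trust a file purely from where it lives.

    Assumes paths are relative to the Drive root, e.g. "Resource Bank/old.md".
    """
    parts = PurePosixPath(path).parts
    if len(parts) == 1:
        return "truth"  # root level: clean, verified, current
    if RESOURCE_BANK in parts[:-1]:
        return "potentially_noisy"  # archived material, treat with caution
    return "unclassified"  # anything else should be reviewed


def build_inventory(files: dict[str, str]) -> list[dict[str, str]]:
    """Build one master-inventory row per file: path, what it's about, trust."""
    return [
        {"path": path, "about": about, "trust": trust_level(path)}
        for path, about in sorted(files.items())
    ]
```

Deriving trust from the path instead of storing it per file means moving a file is the only action needed to change how AI treats it.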

I'm not precious about any of it. If I build something better, the old version becomes noise and gets killed. With AI, rebuilding isn't expensive.

The North Stars came next. One of the first things I did was record about seven hours of myself talking and upload the transcripts. I told AI: find the patterns I'm not seeing. Give me the five most important things about me that meet three criteria: I'm probably unaware of them, knowing them would have a significant positive impact, and they're highly likely to be true.

Three criteria. No instructions on how to process the data. Clear input, clear desired output, let AI think.

What it came back with was uncomfortably on the nose.

That became a Psychological Blueprint — a dense document about how I actually work, what drives me, where I get stuck. When I hand AI that document and say "I'm struggling with this, what am I missing?" and push it hard — because it'll just tell you what you want to hear if you let it — the results are extraordinary.

I built more of these for every domain. They're constitutions, not daily notes. They only change when something real shifts.

Here's a small example of how much this matters. For a while, I just told AI "I have ADHD." It started making wrong assumptions — like that I can't handle a lot of information, that I need things simplified and broken into tiny pieces. That's not my problem at all. I can handle large amounts of information and I learn fast. My issue is working memory — I can process it all, but I can't hold it in my head. When I changed from "I have ADHD" to "I can handle large amounts of information, I learn fast, but I have really poor working memory" — dramatically better results. Sometimes one or two words bring huge clarity. Sometimes you need a whole document. That's what the North Stars do.

I also started a chat session the other day saying "I'm meeting with my brother John to show him something I built." Seemed harmless. But AI started optimizing everything to impress John — changing priorities, suggesting flashy features, drifting away from what actually mattered for the business. One extra sentence about context, and it confused AI about the goal. That's what I mean about noise. It doesn't have to be a bad document or a wrong memory. It can be one offhand comment in a prompt.

What holds it together

Most of what I'm about to describe, I didn't invent. I'd hit a problem, ask AI how to solve it, and it would suggest something. A lot of this is standard engineering practice that any developer would recognize — version control, configuration management, spec documents. I just didn't know that's what it was called. I was a guy with a problem and an AI that knew how to solve it. We figured it out together.

The hub came first. I used to have fifteen individual AI projects that couldn't see each other. Full-time job just moving information between silos. The breakthrough was putting everything in one place where every AI can read and write, with different views for different roles. The thinking AI gets strategy context. The building AI gets technical specs. Ren gets operational data. Same underlying information, completely different interfaces.

Then the infrastructure that keeps it honest. A Decision Queue where any part of the system can flag something that needs my judgment — because without it, the system drifts on its own and you don't notice until something goes wrong. Self-learning rules: when AI makes a mistake on real work, we log it, and those learnings get promoted to shared rules that every project reads at session start. The system also watches what we actually do — when my assistant re-labels something AI mislabeled, the system detects the correction and adjusts. And a Contradiction Audit — a script that runs every night comparing my canonical documents for disagreements. First run found 15 contradictions I didn't know about. Now it's automatic.
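To show the idea behind the Contradiction Audit, here is a deliberately simplified sketch. It assumes each document states facts as `key: value` lines, which is my simplification for illustration. The real audit compares prose documents and presumably relies on AI to spot disagreements. The function names are hypothetical.

```python
from collections import defaultdict


def extract_facts(text: str) -> dict[str, str]:
    """Pull 'key: value' lines out of a document (a simplifying assumption)."""
    facts = {}
    for line in text.splitlines():
        key, sep, value = line.partition(":")
        if sep and key.strip() and value.strip():
            facts[key.strip().lower()] = value.strip()
    return facts


def find_contradictions(docs: dict[str, str]) -> dict[str, dict[str, str]]:
    """Return {fact_key: {doc_name: value}} for keys whose values disagree."""
    seen: dict[str, dict[str, str]] = defaultdict(dict)
    for name, text in docs.items():
        for key, value in extract_facts(text).items():
            seen[key][name] = value
    return {
        key: by_doc
        for key, by_doc in seen.items()
        if len({v.lower() for v in by_doc.values()}) > 1
    }
```

Anything this returns would go to the Decision Queue rather than being fixed automatically, since deciding which document is right is a judgment call.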

Change logs, build specs, and system manifests underneath all of it — so that any AI session, on any platform, can pick up exactly where the last one left off. I do the growth work, the building, and the decisions. AI does everything else.

Master Page: The single source of truth. Every file, every project, every venture, tracked in one place.

North Stars: Dense strategy docs per domain. AI reads these first. Updated only when something real changes.

System Manifest: What exists, what's running, what connects to what. The technical map of the whole ecosystem.

Change Log: Every significant change, logged. Any AI session picks up exactly where the last one left off.

Build Handoffs: Specs that move work between thinking and building. The bridge between strategy and execution.

Decision Queue: Items that need human judgment. Without this, the system drifts and you don't notice.

How I build

There's a popular idea right now — tools where you just talk to AI and it figures everything out. I tried it. It's a disaster. No separation between thinking and building. You end up with a mess that breaks the moment you change anything.

I keep three modes separate.

Deciding: I load the thinking AI with my strategy documents, change log, build roadmap, and whatever's relevant. We think together — strategy, not code. We produce a spec and write it to a handoff page.

Building: A different AI reads the handoff page and builds. I test fast, iterate, and when it's right we close out — audit the build, completion report, update the logs.

Using: The rest of the time I'm just a user of the things I've built. When I notice something — "you never need to ask me this again" or "add this to the build queue" — I feed it back. Not triage. Improvement.

These aren't three separate activities. They're a loop. I use the system, I hit friction, I strategize what to offload, I build it, I go back to using the system at a higher level. Every time around, AI handles more and I handle less. That's why being all three — user, strategist, builder — is what makes it compound. If you outsource any one of those, the loop breaks.

The two AIs coordinate through documents, not shared context. Specs on handoff pages. Completion reports with rollback plans. Full paper trail.
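Coordinating through documents rather than shared context might look something like the sketch below. The field names, the JSON format, and the rule that a report must carry a rollback plan are assumptions for illustration. The text only says that specs live on handoff pages and that completion reports include rollback plans.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class BuildSpec:
    title: str
    goal: str
    acceptance_checks: list[str] = field(default_factory=list)


@dataclass
class CompletionReport:
    spec_title: str
    summary: str
    rollback_plan: str


def write_handoff(spec: BuildSpec) -> str:
    """Serialize a spec so the building AI can read it with no shared chat context."""
    return json.dumps({"type": "spec", **asdict(spec)}, indent=2)


def close_out(handoff_json: str, summary: str, rollback_plan: str) -> CompletionReport:
    """Read the handoff document and produce the report that closes the loop."""
    spec = json.loads(handoff_json)
    if not rollback_plan.strip():
        raise ValueError("A completion report needs a rollback plan")
    return CompletionReport(spec["title"], summary, rollback_plan)
```

Because the only thing passed between the two sides is the serialized document, either AI could be swapped for a different model or platform without the other noticing.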

I'll be honest about the traps. I fall into all of them. Building elaborate systems instead of shipping something. Starting twelve things at once because everything feels urgent. Organizing instead of executing. My AI catches these too — because it knows my patterns, it can tell me when I'm doing it.

If I were starting over tomorrow

The principles took three years. The infrastructure came fast once the foundation was in place. Here's the short version of the order I wish I'd done it in.

Record yourself talking for a few hours. Not about your system — about your life. What matters to you, what you're working on, what keeps you up at night. Transcribe it. Upload to AI: find the patterns I'm not seeing. Two pages max. That's your seed.

Build your North Stars — one dense document per major domain of your life. Updated only when something real changes.

Clean your files. Root level equals truth. If you skip this, everything you build processes garbage.

Pick a hub that AI can write to. Turn off built-in memory. Start building — thinking model for strategy, coding tool for building, keep them separate.

I'm building out the full step-by-step version of this on the site. What I've described here is the map. The detailed guide is coming soon.

Everyone's working memory is limited. You're running multiple projects, relationships, priorities, and most people can fake it until things start falling through the cracks. I couldn't fake it. I had to externalize everything, and that forced discipline turned out to be exactly what AI needs.

Build your system as if no one will remember anything. If it depends on a human remembering to check something, it'll fail.

The system does the work. Every day it gets a little easier. It's not discipline — it's architecture.

Start with one document. Record yourself talking. Let AI find the patterns you can't see. That's your seed. Everything else follows.

I'm building everything I've described here in public. Every principle, every build, every mistake. When I publish this, I'll include the real dashboards, the real daily page, the real processes running — not mockups.

If you want to follow along — or if you're going deep with AI in ways you don't see anyone else talking about, especially if you're not a developer — subscribe. I share everything I'm learning. Free.
