Every morning, my AI wakes up before I do.

By the time I open my eyes, it's already reviewed my business, my finances, my writing projects, my family calendar — and built my plan for the day. This morning it caught a client renewal expiring in three days that I'd completely forgotten about. It flagged that my book manuscript hadn't been touched in eleven days. It moved a low-priority task to next week because my calendar was packed.

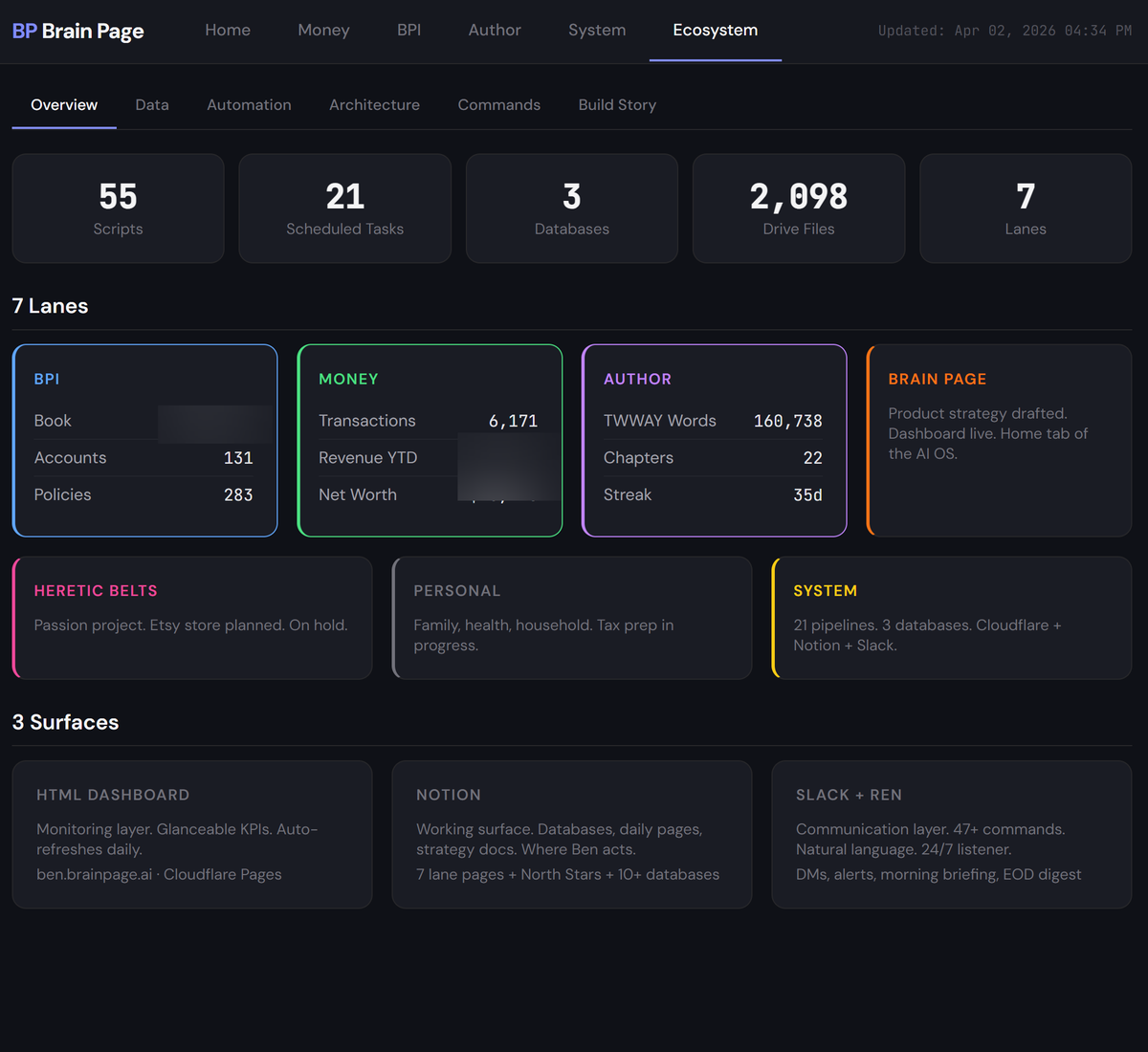

The AI that runs all of this knows my values, my goals, how I work, where my weaknesses are. It knows I tend to overbuild things, that I hyperfocus, that I have poor working memory. It balances everything across seven areas of my life — not with rules I programmed, but because it has a complete picture of who I am and what matters. The whole system runs for pennies a day. I haven't written code since the 1980s.

I'm Ben Page. I run an insurance agency in Utah, and I've spent three years building an AI system that actually runs my life — not just answers my questions. This is where I share everything I'm learning.

What I've built

I didn't set out to build a system. I set out to stop drowning.

I have ADHD. Huge task lists are overwhelming. I was jumping between apps, losing track of conversations, forgetting follow-ups. I tried Front App for omni-channel communication — $250 a month. I tried every task manager, every "second brain" tool. They all felt like more work to maintain than the work they were supposed to organize.

So I built my own thing. Not a second brain — a personal assistant. The difference is that a second brain is something you run. This runs itself.

Now every call I take gets transcribed and summarized by AI that actually understands my business — not generic summaries, but exactly the information I need, organized the way I need it. Every text, every email, every voicemail gets triaged automatically. My finances are tracked without QuickBooks. My daily plan writes itself. And every bit of it cost less to build and run than a single month of the software I replaced.

The actual system. Not a mockup.

Not a second brain. A personal assistant.

I tried the "second brain" thing for years. Notion. Obsidian. Elaborate systems that were impressive to set up and impossible to maintain. The dirty secret of every second brain is that it depends on YOU remembering to use it.

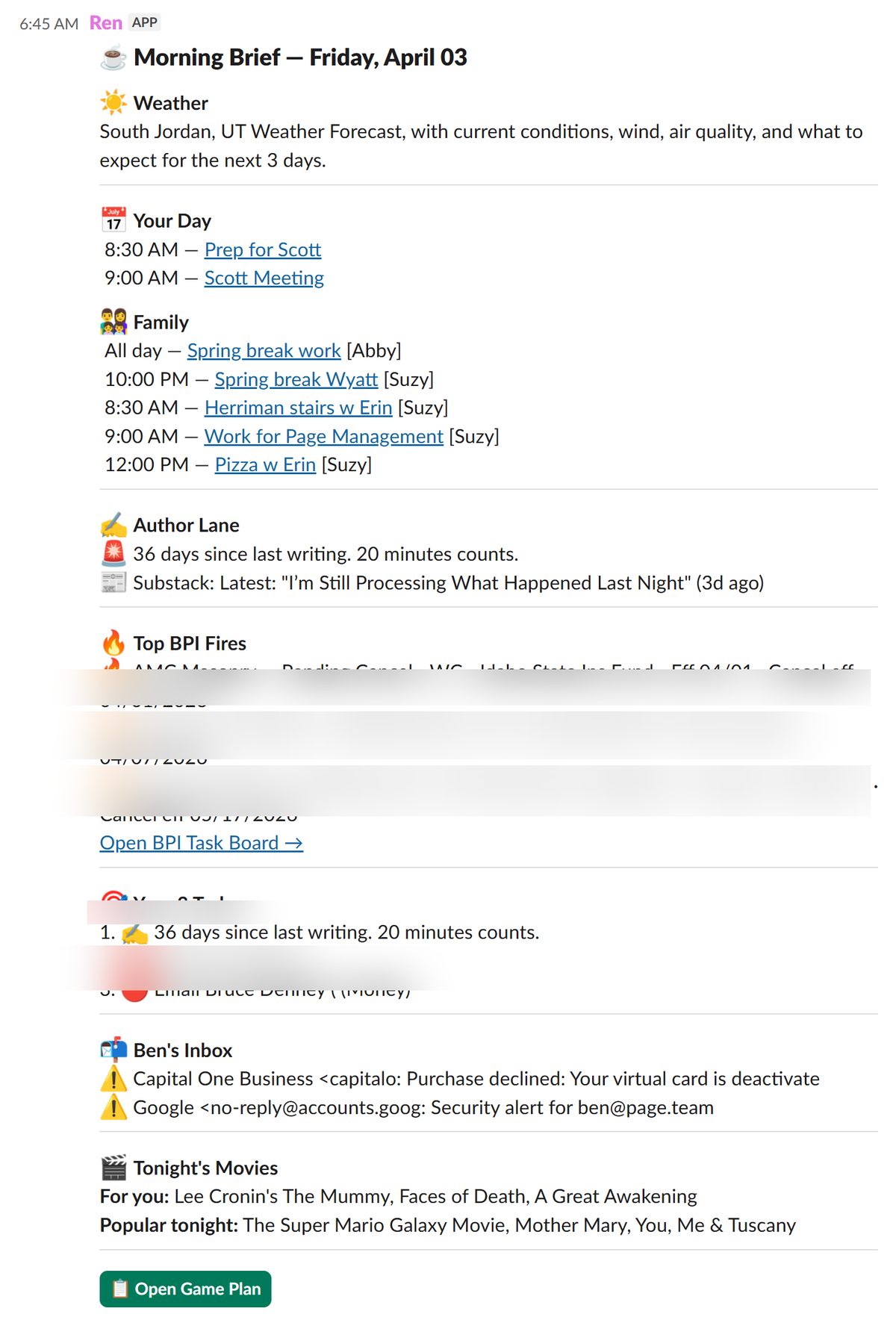

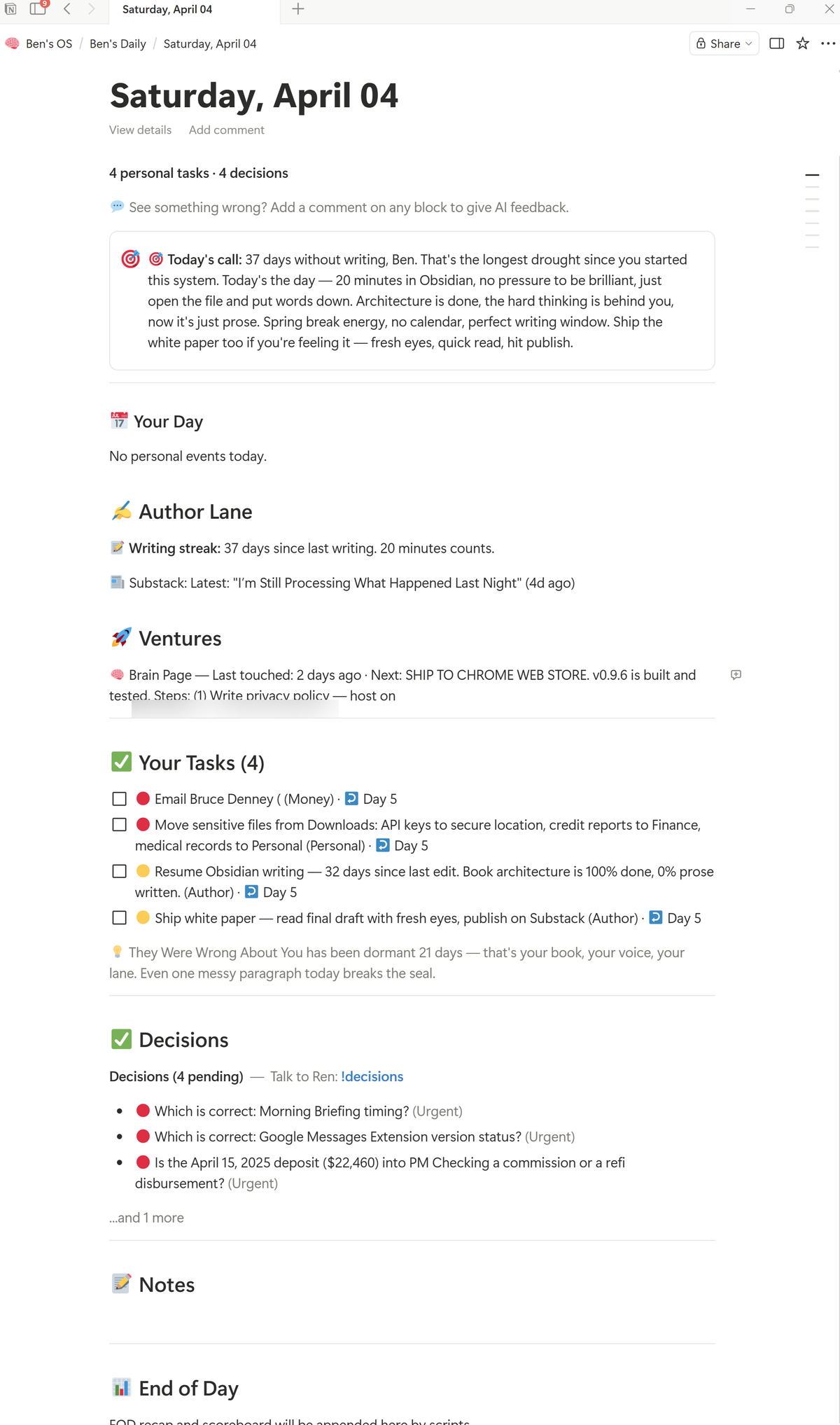

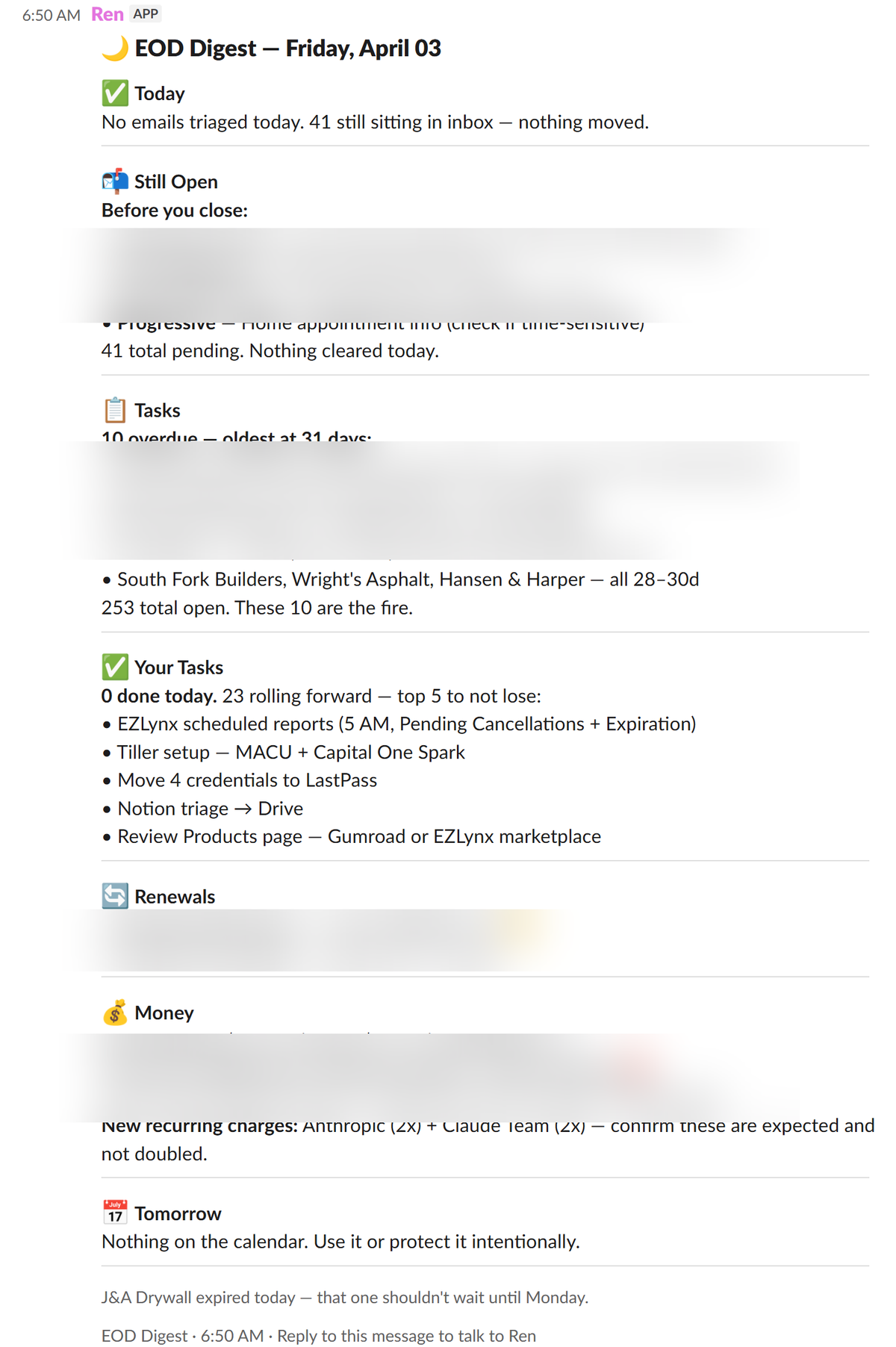

What I've built is different. I have an AI assistant named Ren. He lives in Slack — on my phone and my desktop — and he runs around the clock. Every morning he builds my plan for the day. I follow the plan. Every evening he closes the loop: here's what happened, what's still open, what needs you tomorrow.

But the most powerful part isn't the morning or the evening. It's that Ren is there with me all day long. He's like Alfred to Batman. I can ask him anything about my business, my finances, my schedule, my clients — and he knows, because he's connected to my entire ecosystem. Every document, every database, every venture.

"Create a proposal for this client, here's what I want." He cranks it out. He knows where the files are, what the client history looks like, what format I use. "Add a Google contact." "What's the status on that renewal?" "Update the change log." Done.

I don't have to organize anything throughout the day. I drop stuff everywhere — a quick note, a phone call comes in, texts arrive, new emails hit the inbox — and all of it gets handled. Triaged, categorized, routed. I don't have to remember to save anything or file anything. Ren's organizing in the background while I'm working.

He also asks me questions throughout the day — proactively surfacing decisions I need to make, things I'm forgetting, patterns I'm not seeing. Think less "filing cabinet" and more "chief of staff who never sleeps."

Why be more than just a user?

Most people use AI like Google. Ask a question, get an answer, move on. That's fine. But you'll never get what I just described that way.

The reason my system knows me, really knows me, is that I don't just use it. I also strategize what to build next, and then I build it. The speed of iteration is insane. I do a little bit of a build every single day, so something in my system gets smarter every day: the way it triages my email, what it knows about my values, how it scores my priorities, what it catches that I would have missed.

And it's not just me figuring out what to build. The AI is smart enough to suggest improvements on its own. Throughout the day: "Hey, maybe we should add this to the build roadmap." "This process could be automated." "You've done this manually three times — want me to build it?" I have a build roadmap, and AI helps me maintain it.

At night, it goes further. Ren runs a full inventory of my entire ecosystem — every file, every database, every document. He looks for discrepancies, contradictions, things that are out of date. If he can fix it, he does. If it needs human judgment, it goes on my decision queue — and that becomes part of tomorrow's daily plan.

I'm riding a wave of constant improvement. Every cycle, I offload more to AI and operate at a higher level. That's the flywheel:

Use: You live in the system. Daily game plan, Ren in Slack, everything pushed to you. You hit friction — "this should be automated" or "Ren should know this."

Strategize: You take that friction to a thinking AI. You provide clarity — clean documents, honest context, real priorities. Together you decide what to build next and write the spec.

Build: A building AI reads the spec and ships it. You test, iterate, deploy. The system gets smarter. You go back to using it at a higher level.

With every turn of the flywheel, AI handles more and you handle less. The system compounds because it knows you better every day, not from automated memories but from the clear, maintained documents you've built. Outsource any one of the three roles, and the loop breaks.

I share everything I'm learning. Free.

What's working, what isn't, and how to build your own. No hype. No spam.

What three years taught me

Your files are code

Every document AI reads is an instruction it's executing against. A messy Google Drive is a messy codebase. Fix the inputs, and everything downstream changes. I went from 15,135 files to 2,453. Fewer than a hundred at root level. That's when things got real.
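If you want to measure your own starting point, here is a minimal sketch, assuming your documents are synced to a local folder. The function name `count_files` and the folder path are illustrative, not part of the system described here.

```python
from pathlib import Path


def count_files(root: str) -> tuple[int, int]:
    """Return (total files anywhere under root, files sitting directly in root)."""
    base = Path(root)
    # rglob("*") walks every subfolder; keep only regular files.
    total = sum(1 for p in base.rglob("*") if p.is_file())
    # iterdir() lists only the top level of the folder.
    at_root = sum(1 for p in base.iterdir() if p.is_file())
    return total, at_root


if __name__ == "__main__":
    # "." is a placeholder; point it at your own synced documents folder.
    total, at_root = count_files(".")
    print(f"{total} files total, {at_root} at root level")
```

Run it before and after a cleanup to see whether the numbers are moving in the right direction.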

Read the full story →

Clarity beats complexity

I replaced 200 lines of scoring logic with one sentence: sort these by consequence. Same AI model. Dramatically better results. The lesson applies to everything: clear inputs, clear desired output, let AI do the thinking. Stop telling it how to think — tell it what you want.
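To make the idea concrete, here is a rough sketch of what "one sentence instead of scoring logic" can look like. It only builds the prompt text; sending it to a model is left out because that depends on the provider. The function name `build_triage_prompt` and the sample tasks are assumptions for illustration, not the actual code behind this system.

```python
# The single instruction quoted above; no weights, no scoring rules.
INSTRUCTION = "Sort these by consequence."


def build_triage_prompt(tasks: list[str]) -> str:
    """Combine the one-sentence instruction with a numbered task list."""
    numbered = "\n".join(f"{i}. {task}" for i, task in enumerate(tasks, start=1))
    return f"{INSTRUCTION}\n\n{numbered}"


# Hypothetical sample tasks, loosely based on the examples earlier on this page.
tasks = [
    "Reschedule a low-priority task",
    "Client renewal expires in three days",
    "Book manuscript untouched for eleven days",
]
prompt = build_triage_prompt(tasks)
print(prompt)
```

The point of the design is that the code stays dumb: it states the desired output and hands over clean inputs, and the model does the ranking.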

Read the full story →

The system IS the memory

I turned off built-in memory on every AI platform. My files, my documents, my ecosystem — that's the memory. Delete every chat today, lose nothing. Switch AI providers tomorrow, lose nothing. The brain lives in your files, not inside any AI.
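One way to picture "the brain lives in your files" is a helper that rebuilds the AI's context from documents on every request, so nothing depends on chat history. This is a minimal sketch under stated assumptions: the documents are plain-text or Markdown files in a local folder, and the name `load_context` and the header format are made up for illustration.

```python
from pathlib import Path


def load_context(doc_dir: str, suffixes: tuple[str, ...] = (".md", ".txt")) -> str:
    """Concatenate every matching document under doc_dir into one context string."""
    base = Path(doc_dir)
    parts = []
    # Sort the paths so the context is identical from run to run.
    for path in sorted(base.rglob("*")):
        if path.is_file() and path.suffix in suffixes:
            name = path.relative_to(base).as_posix()
            text = path.read_text(encoding="utf-8")
            # Label each document so the model can tell where the text came from.
            parts.append(f"## {name}\n{text}")
    return "\n\n".join(parts)
```

Because the context is rebuilt from files each time, the same string can be handed to any provider, which is what makes deleting chats or switching platforms harmless.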

Read the full story →

What I'm working on this week

Updated April 2026

- 01 Publishing the full manifesto. Three years of lessons in one 20-minute read. Read it now →

- 02 Building a Chrome extension for managing text messages through AI. First Brain Page product.

- 03 Documenting everything on Substack. Build logs, philosophy deep-dives, and practical guides.

Subscribe for the playbook

I'm documenting every step. Philosophy, build logs, practical guides. Free.